AI in cyber security: does it do harm or good? In this week’s Live Cybersecurity Awareness Forum we covered one of the most talked-about trends in technology: Artificial Intelligence. It was one of our best-attended forums, with over one hundred registered attendees. Everyone is talking about AI.

The reason we hold the Live Cyber Security Awareness Forum sessions is to get input from some top cyber security experts on the topic we are discussing. So here are some key opinions from three panelists who are influential in the cybersecurity space:

Fletus Poston (FP) – A returning panelist and security champion, Fletus is a Senior Manager of Security Operations at CrashPlan®. CrashPlan® provides peace of mind through secure, scalable, straightforward endpoint data backup for any organization.

Michelle L. (ML) – A first-time CSAF panelist, Michelle is the Cyber Security Awareness Lead at Channel 4 in the UK. Channel 4 is a publicly owned and commercially funded UK public service broadcaster.

Ryan Healey-Ogden (RH) – Ryan is Click Armor’s Business Development Director. He also teaches at the University of Toronto College Cybersecurity Bootcamp.

And myself, Scott Wright (SW), CEO of Click Armor, the sponsor for this session.

What are the risks associated with broad adoption of AI in cyber security?

ML: One of the things I think of with, say, ChatGPT4 and eventually all versions of ChatGPT, is that it could make phishing emails a lot more realistic. It is quite terrifying, actually, how realistic they are, because all the things we tell people to look out for, like spelling mistakes and bad grammar, are not really in those emails.

FP: So, coming from the cyber defense side, I’m not overly concerned at the moment. I do think Michelle is right that as it grows and develops it will cause more issues, because what we’ve been able to detect, look at, and share with our users is no longer grammar or anything on the typical checklist. It’s now teaching them to look at things like the tone of the sender.

For example, when the CEO emails you, do they use this tone? Do they use this inflection? I can give ChatGPT a command telling it to write like my CEO, but most adversaries are just doing prompts. So, it’s going to use different language than you’re used to seeing in your environment.

RH: Yeah, I’d have to agree with Michelle and Fletus. It’s going to change the focus of security awareness training.

I noticed another issue when speaking to my students who are part of this year’s new cohort. At the end of the first day, we opened up to questions, and the first three questions were all about AI and whether their jobs will even still be there by the time they finish the course.

So, now you already have people who are about to enter the industry being afraid that they may not be able to operate in it. We already have a shortage of critical talent in cybersecurity, and now we’re seeing AI discouraging people from potentially going down this road because they’re thinking, well, the computer’s going to do it for me, so what am I going to do to compete against that?

FP: I’m not sure A.I. is ever going to truly get down to the emotional piece of it. Not saying it won’t. I think there are going to be times when it’s going to share information and not be able to read the room on it.

ML: This is an area of security that people really overlook. We call it soft skills, as opposed to critical skills and writing. Creating relationships with people is actually very hard, and it’s critical. Try running a business without it and you don’t get very far. So yeah, I’m not worried about AI doing that part of the job.

SW: One of the scary things for me is the advancement of things like deepfakes using AI. My concern is that we’re going to stop trusting what we see on any media because it can be faked so easily. It’s always going to be a cat and mouse game.

What do you feel will provide the greatest benefit from AI for security awareness?

RH: On the flip side, I think it’s going to help users learn more in real time. It’s going to give them better examples. It’s going to bring more relevance to the training. It’s just a matter of us managing the system to get it to help us rather than letting it be a problem.

ML: I think it makes awareness training more collaborative. Something we’re going to look at doing at Channel 4 is sending people to ChatGPT and having them tell us which phishing emails would really work. So now, we are collaborating with people instead of the traditional, “Ha! We caught you!” training approach.

FP: I agree, I think awareness managers should use it. For example, say you have a very technical document and you want to make it easier for consumers to digest by taking out the technical terms. You can ask it to rewrite the document from the point of view of x.

SW: From my point of view, I’ve gotten a lot of good use out of ChatGPT just for helping me come up with complete lists of things. But, if I was going to be trusting it for some critical type of work, I wonder how much trust should I put into it and how do I verify who actually is behind it?

When AI becomes widely adopted, what will employee decisions and security awareness look like?

RH: When I think about the future of AI in the workplace, I wonder at what point will it be our co-pilot? At what point will we be going through our daily tasks and it’s just there, monitoring what we are doing. I imagine it basically being a big brother, hovering over your shoulder to make sure that you’re not going to share something that is PII or internal source, or you’re not going to click on an email you shouldn’t. I wonder how AI could actually be leveraged to do that? And what would be the adoption and uptake of that?

SW: I think it’s going to happen because there will be so much automation and outsourcing of things that the decisions that employees are left to make are going to be really important and it won’t just be: should I do this or that?

It’s going to be, “Am I going to allow this input to go into the automation?” or “What happens if the automation just fails and I have to do something on my own?” So, I think people are going to have to become better individual risk managers rather than just watching out for bad domains and things like that.

RH: It’s like when Google first came out, we all had to learn how to Google things. Now, people in our generation just know how to solve these problems; we just seem to know how to Google our way to a solution. It is a successful way of critical thinking. So, now we’re going to have to learn new skills about how to use AI.

FP: I think there are going to be roles that are dedicated to just using AI to build content, to check content, and to look for data, more or less like the data checkers we used to have.

They just announced that Microsoft is going to integrate its AI into its Office platform. You can upload an example of a document that you’ve written and it will give you feedback on how to write that document again for your new audience.

So, you have to know what your policy changes are going to look like from an awareness point of view, what your governance and risk are going to look like if you enable that function inside of your office toolset.

SW: I think it’s going to be interesting because I think a lot of people don’t realize that that AI capability exists already or is being built sort of coincident with the actual technology. And it’s going to be a really fast level of integration for a lot of tools that just start leveraging this stuff. And I think a lot of people don’t realize that’s going to come pretty quickly.

How do we protect ourselves?

FP: So, what we’ve already done is just remind my employees and managers that nothing that is considered internal should ever be copied and pasted into ChatGPT, Bard, or any of the up-and-coming tools. If they want to use it to edit something, they can let us know and we can talk through what that looks like.

ML: We have got some soft-touch regulation coming. The government here in the UK has just approved some suggestions that there should be some regulation. I’m glad to see it coming because we do need guardrails on these unpaved roads. A lot of people want to rush into using it to show that they are modern. But I think it’s worth taking some time.

I don’t think there’s anything wrong with saying, hold on a minute, let’s think about this. If we had done that before they invented email, we might be a little better off. Right?

FP: Another analogy I have is from the early 1900s when streetlights were first produced. People were outraged that there were going to be lights outside their houses. They were used to going out when the sun was up and going home when it was down. Can you imagine if we had listened and did not have a light to light up the environment after the sun went down?

I think AI is like that. We’re all scared because we don’t know the value and we don’t know the benefits. There are risks. There’s risk in having a streetlight, but it comes down to the risk-value proposition. What is the risk-reward of the technology going to be?

In the end, it’s going back to the simple philosophy that we talk about all the time: Trust but verify. Do what I mentioned earlier, or ask yourself if it sounds like the person you normally speak to, and then verify it. You can never take it from one source.

–

While AI in cyber security does introduce a new set of risks, it also introduces a larger opportunity for us to advance our training and focus on the bigger picture, from a human risk perspective. I hope this panel inspired you to dive into the world of AI and its associated risks a bit more.

To learn more about the role of AI in Cyber Security, check out the full recording of our panel session. Thank you to our panelists and attendees for tuning in!

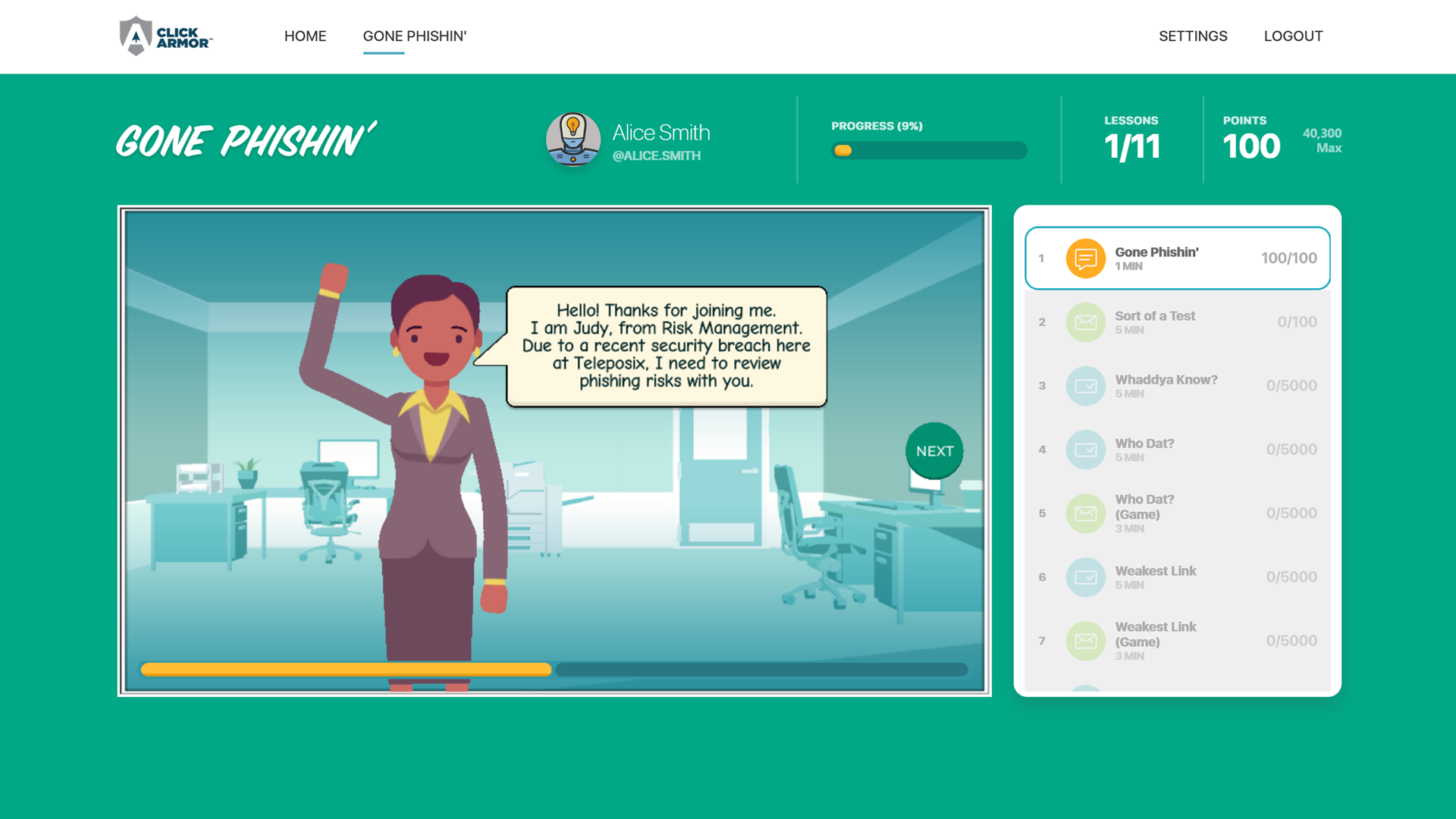

Scott Wright is CEO of Click Armor, the gamified simulation platform that helps businesses avoid breaches by engaging employees to improve their proficiency in making decisions for cyber security risk and corporate compliance. He has over 20 years of cyber security coaching experience and was the creator of the Honey Stick Project for Smartphones, a demonstration in measuring human vulnerabilities.